
Deploy Apache Kafka through the Linode Marketplace

Quickly deploy a Compute Instance with a variety of software applications pre-installed and ready to use.

Apache Kafka is a robust, scalable, and high-performance system for managing real-time data streams. Its versatile architecture and feature set make it an essential component for modern data infrastructure, supporting a wide range of applications, from log aggregation to real-time analytics and more. Whether you are building data pipelines, event-driven architectures, or stream processing applications, Kafka provides a reliable foundation for your data needs.

Our marketplace application allows the deployment of a Kafka cluster using Kafka’s native consensus protocol, KRaft. There are a few things to highlight from our deployment:

- During provisioning, the cluster is configured with mTLS for authentication. This means that both inter-broker communication and client authentication are established via certificate identity.

- The minimum cluster size is 3. At all times, 3 controllers are configured in the cluster for fault-tolerance.

- Clients that connect to the cluster need their own valid certificate. All certificates are signed with a self-signed Certificate Authority (CA). Client keystores and truststore are found on the first Kafka node in `/etc/kafka/ssl/keystore` and `/etc/kafka/ssl/truststore` once the deployment is complete.

- The CA key and certificate pair are on the first Kafka node in `/etc/kafka/ssl/ca` once the deployment is complete.
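To illustrate what it means for a client certificate to be signed by a self-signed CA, here is a minimal, self-contained sketch using the `openssl` CLI in a temporary directory. It does not touch the cluster's real files under `/etc/kafka/ssl`. The subject names `example-ca` and `client1`, the key size, and the one-day validity are assumptions chosen for the demo, and the Marketplace App's actual provisioning steps may differ.

```shell
#!/bin/sh
set -eu

# Work in a throwaway directory so nothing on the system is modified.
workdir=$(mktemp -d)
cd "$workdir"

# 1. Create a self-signed CA key and certificate (demo subject name).
openssl req -x509 -newkey rsa:2048 -nodes -days 1 \
  -subj "/CN=example-ca" -keyout ca.key -out ca.crt 2>/dev/null

# 2. Create a client key and a certificate signing request (CSR).
openssl req -newkey rsa:2048 -nodes \
  -subj "/CN=client1" -keyout client1.key -out client1.csr 2>/dev/null

# 3. Sign the client CSR with the CA.
openssl x509 -req -in client1.csr -CA ca.crt -CAkey ca.key \
  -CAcreateserial -days 1 -out client1.crt 2>/dev/null

# 4. Check that the client certificate chains back to the CA.
verify_result=$(openssl verify -CAfile ca.crt client1.crt)
echo "$verify_result"
```

With mTLS, each side performs a check of this kind on the certificate presented by the other side, which is why every client needs a certificate issued by the cluster's CA.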

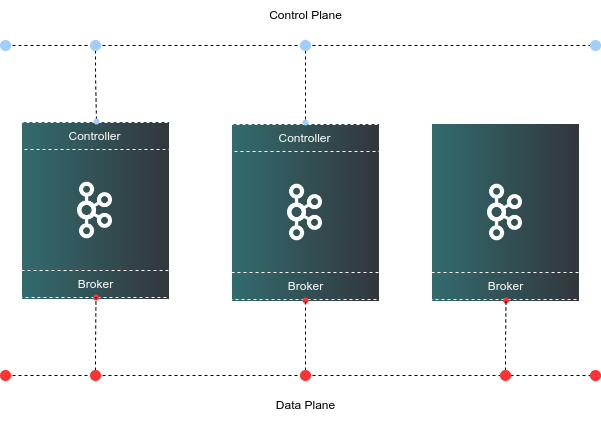

Cluster Deployment Architecture

Deploying a Marketplace App

The Linode Marketplace lets you easily deploy software on a Compute Instance using Cloud Manager. See Get Started with Marketplace Apps for complete steps.

1. Log in to Cloud Manager and select the Marketplace link from the left navigation menu. This displays the Linode Create page with the Marketplace tab pre-selected.

2. Under the Select App section, select the app you would like to deploy.

3. Complete the form by following the steps and advice within the Creating a Compute Instance guide. Depending on the Marketplace App you selected, there may be additional configuration options available. See the Configuration Options section below for compatible distributions, recommended plans, and any additional configuration options available for this Marketplace App.

4. Click the Create Linode button. Once the Compute Instance has been provisioned and has fully powered on, wait for the software installation to complete. If the instance is powered off or restarted before this time, the software installation will likely fail.

To verify that the app has been fully installed, see Get Started with Marketplace Apps > Verify Installation. Once installed, follow the instructions within the Getting Started After Deployment section to access the application and start using it.

Configuration Options

- Supported distributions: Ubuntu 22.04 LTS

- Suggested minimum plan: All plan types and sizes can be used depending on your storage needs.

Kafka Options

- Linode API Token: The provisioner node uses an authenticated API token to create the additional components of the cluster. This is required to fully create the Kafka cluster.

Limited Sudo User

You need to fill out the following fields to automatically create a limited sudo user, with a strong generated password for your new Compute Instance. This account will be assigned to the sudo group, which provides elevated permissions when running commands with the sudo prefix.

Limited sudo user: Enter your preferred username for the limited user. No Capital Letters, Spaces, or Special Characters.

Locating The Generated Sudo Password: A password is generated for the limited user and stored in a `.credentials` file in their home directory, along with application-specific passwords. This can be viewed by running:

    cat /home/$USERNAME/.credentials

For best results, add an account SSH key for the Cloud Manager user that is deploying the instance, and select that user as an `authorized_user` in the API or by selecting that option in Cloud Manager. Their SSH public key will be assigned to both root and the limited user.

Disable root access over SSH: To block the root user from logging in over SSH, select Yes. You can still switch to the root user once logged in, and you can also log in as root through Lish.

Accessing The Instance Without SSH: If you disable root access for your deployment and do not provide a valid Account SSH Key assigned to the `authorized_user`, you will need to log in as the root user via the Lish console and run `cat /home/$USERNAME/.credentials` to view the generated password for the limited user.

Number of clients connecting to Kafka: The number of clients that will be connecting to the cluster. The application creates an SSL certificate for each client that needs to connect to the cluster. This should be an integer equal to or greater than 1.

Kafka cluster size: The size of the Kafka cluster. One of 3, 5 or 7 instances.

Country or Region: Enter the country or region for you or your organization.

State or Province: Enter the state or province for you or your organization.

Locality: Enter the town or other locality for you or your organization.

Organization: Enter the name of your organization.

Email Address: Enter the email address you wish to use for your certificate file.

Do not use a double quotation mark character (") within any of the App-specific configuration fields, including user and database password fields. This special character may cause issues during deployment.

Getting Started After Deployment

Obtain Keystore and Truststore

Authenticating clients requires a keystore and a truststore. Once the deployment is complete, access the first Kafka node and obtain your client keystores and truststore from `/etc/kafka/ssl/keystore` and `/etc/kafka/ssl/truststore`. Client certificate names start with the word “client” followed by a number, up to the number of clients you requested during deployment, for example, client1 and client2.

We suggest transferring the client certificates to the Kafka consumers and producers using a secure method such as SSH or an encrypted HTTPS web UI.
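As one way to do the transfer over SSH, the sketch below prints the `scp` commands a client machine could run to fetch its files. It is a dry run that only echoes the commands. The login `admin@192.0.2.10` and the destination directory `./kafka-certs` are hypothetical placeholders, and the file names `client1.keystore.jks` and `server.truststore.jks` are taken from the `client.properties` example in the Testing section. It also assumes the login user is allowed to read `/etc/kafka/ssl`, which may not be the case on your deployment.

```shell
#!/bin/sh
set -eu

FIRST_NODE="admin@192.0.2.10"   # hypothetical user and documentation-range IP
CLIENT_ID=1                     # which client certificate to fetch
DEST_DIR="./kafka-certs"        # hypothetical local directory

# Print (do not run) the copy commands for one client's keystore
# and the shared truststore.
print_copy_commands() {
  echo "scp ${FIRST_NODE}:/etc/kafka/ssl/keystore/client${CLIENT_ID}.keystore.jks ${DEST_DIR}/"
  echo "scp ${FIRST_NODE}:/etc/kafka/ssl/truststore/server.truststore.jks ${DEST_DIR}/"
}

copy_commands=$(print_copy_commands)
echo "$copy_commands"
```

After reviewing the printed commands, create the destination directory and run them by hand on the client machine.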

Authentication

Once you’ve copied your keystores and truststore to your client, your client application(s) will need the passwords for the keystore and truststore. The credentials can be found in the home directory of the sudo user created on deployment: `/home/$SUDO_USER/.credentials`. For example, if you created a user called admin, the credentials file will be found in `/home/admin/.credentials`.

Testing

You can run a quick test from any of the Kafka nodes using Kafka’s utilities found in /etc/kafka/bin.

Create a file called `client.properties` with the following content:

File: client.properties

    security.protocol=SSL
    ssl.truststore.location=/etc/kafka/ssl/truststore/server.truststore.jks
    ssl.truststore.password=CHANGE-ME
    ssl.keystore.location=/etc/kafka/ssl/keystore/client1.keystore.jks
    ssl.keystore.password=CHANGE-ME

Make sure that you update the values marked with CHANGE-ME with your own.
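If you prefer to generate this file from a script, the following sketch writes the same five properties from shell variables. The function name `write_client_properties` and its argument order are hypothetical, and the passwords shown are placeholders that you would replace with the values from your `.credentials` file.

```shell
#!/bin/sh
set -eu

# Usage: write_client_properties OUT_FILE CLIENT_ID TRUSTSTORE_PASSWORD KEYSTORE_PASSWORD
write_client_properties() {
  out_file=$1
  client_id=$2
  truststore_password=$3
  keystore_password=$4

  (
    # Restrict permissions on a newly created file, since it holds passwords.
    # (umask has no effect on a file that already exists.)
    umask 077
    cat > "$out_file" <<EOF
security.protocol=SSL
ssl.truststore.location=/etc/kafka/ssl/truststore/server.truststore.jks
ssl.truststore.password=${truststore_password}
ssl.keystore.location=/etc/kafka/ssl/keystore/client${client_id}.keystore.jks
ssl.keystore.password=${keystore_password}
EOF
  )
}

# Example: write the file for client 1 into a temporary directory.
props_file="$(mktemp -d)/client.properties"
write_client_properties "$props_file" 1 "CHANGE-ME" "CHANGE-ME"
cat "$props_file"
```

Passing the client number as an argument keeps the keystore path in step with the `client1`, `client2`, ... naming used for the generated certificates.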

Create a topic to test the connection and authentication:

    /etc/kafka/bin/kafka-topics.sh --create --topic test-ssl --bootstrap-server kafka3:9092 --command-config client.properties

This results in the following output:

    Created topic test-ssl.

Once the topic is created, you can publish a message to this topic as a producer:

    echo "Kafka rocks!" | /etc/kafka/bin/kafka-console-producer.sh --topic test-ssl --bootstrap-server kafka3:9092 --producer.config client.properties

You can read the message as a consumer by issuing the following:

    /etc/kafka/bin/kafka-console-consumer.sh --topic test-ssl --from-beginning --bootstrap-server kafka3:9092 --consumer.config client.properties

This results in the following output:

    Kafka rocks!
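The console consumer keeps running until it is interrupted. For a scripted check, a sketch like the one below reads a single message and then removes the test topic. It uses the consumer's `--max-messages` and `--timeout-ms` options and the `--delete` option of `kafka-topics.sh`. The 10-second timeout and the dry-run fallback are assumptions of this sketch: when Kafka's tools are not installed on the machine running it, the commands are printed instead of executed. Note that on a Kafka node this really deletes the `test-ssl` topic.

```shell
#!/bin/sh
set -eu

KAFKA_BIN=${KAFKA_BIN:-/etc/kafka/bin}
BOOTSTRAP=${BOOTSTRAP:-kafka3:9092}
TOPIC=${TOPIC:-test-ssl}
CONFIG=${CONFIG:-client.properties}

# Run the command if Kafka's tools are installed; otherwise only print it.
if [ -x "$KAFKA_BIN/kafka-console-consumer.sh" ]; then
  run() { "$@"; }
else
  run() { echo "[dry run] $*"; }
fi

# Read one message from the start of the topic, giving up after 10 seconds.
consume_once() {
  run "$KAFKA_BIN/kafka-console-consumer.sh" --topic "$TOPIC" --from-beginning \
    --max-messages 1 --timeout-ms 10000 \
    --bootstrap-server "$BOOTSTRAP" --consumer.config "$CONFIG"
}

# Remove the test topic once the check is done.
delete_topic() {
  run "$KAFKA_BIN/kafka-topics.sh" --delete --topic "$TOPIC" \
    --bootstrap-server "$BOOTSTRAP" --command-config "$CONFIG"
}

consume_output=$(consume_once)
echo "$consume_output"
delete_output=$(delete_topic)
echo "$delete_output"
```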

Software Included

The Apache Kafka Marketplace App installs the following software on your Linode:

| Software | Version | Description |
|---|---|---|
| Apache Kafka | 3.7.0 | Scalable, high-performance, fault-tolerant stream processing application |
| KRaft | | Kafka native consensus protocol |
| UFW | 0.36.1 | Uncomplicated Firewall |
| Fail2ban | 0.11.2 | Brute force protection utility |
